Torch sum


This causes NLP sampling to be impossible.


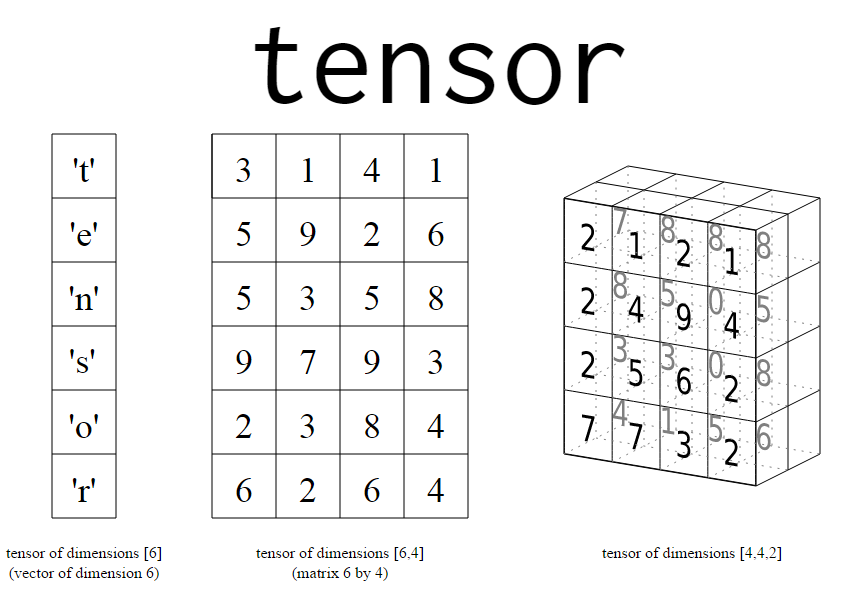

In this tutorial, we take an in-depth look at how to use torch. We will first go through its syntax and then cover its functionality with various examples and illustrations to make it easy for beginners. The torch sum function sums the elements of a PyTorch tensor, either over the whole tensor or along a given dimension (axis). On the surface this may look like a very easy function, but it does not work in an intuitive manner and often gives beginners headaches.

In this example, torch. Hence the resulting tensor is 1-Dimensional.

Again we start by creating a 3-Dimensional tensor of size 2x2x3 that will be used in subsequent examples of the torch sum function. Hence the resulting tensor is a scalar. Hence the resulting tensor is 2-Dimensional.
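As a rough illustration of how the dimension argument changes the result, here is a minimal sketch using `torch.sum`. The tensors and their values are made up for this example and are not the ones from the original tutorial.

```python
import torch

# Illustrative 2x3 tensor (2 rows, 3 columns); values are assumptions.
x = torch.tensor([[1, 2, 3],
                  [4, 5, 6]])

total = torch.sum(x)                      # no dim given: sums every element, 0-dimensional result
col_sums = torch.sum(x, dim=0)            # collapses dim 0 (rows): shape (3,)
row_sums = torch.sum(x, dim=1)            # collapses dim 1 (columns): shape (2,)
kept = torch.sum(x, dim=1, keepdim=True)  # same sums, but the reduced dim stays as size 1: shape (2, 1)

# Illustrative tensor of size 2x2x3 (three dimensions).
y = torch.arange(12).reshape(2, 2, 3)

y_dim0 = torch.sum(y, dim=0)        # reduces the first dimension: shape (2, 3)
y_dims = torch.sum(y, dim=(0, 1))   # reduces two dimensions at once: shape (3,)

print(total, col_sums, row_sums, kept.shape, y_dim0.shape, y_dims)
```

The dimension passed as `dim` is the one that disappears from the output shape, which is usually the part that feels unintuitive at first.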

So it is essentially a CPU or motherboard problem? I tried the following using an Ubuntu


I want to sum them up and backpropagate the error. All the errors are single float values on the same scale, so the total loss is the sum of the individual losses. To understand this, I boiled the problem down to a small example. I wrote this code and it works. Now I want to know how I can make a list of criterions and add them up for backpropagation. I tried these, but they don't work. Note, this is a dummy example. If I understand how to fix this, I can apply it to the recursive neural nets.
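One possible way to do this, sketched under assumptions: the model, data, and choice of loss functions below are placeholders invented for illustration, not the code from the question. The idea is to keep each loss as a tensor, add the tensors together, and call `backward()` once on the total.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical stand-ins for the real network and data.
model = nn.Linear(4, 1)
inputs = torch.randn(8, 4)
targets = torch.randn(8, 1)

# A list of criterions; each one returns a single scalar loss tensor.
criteria = [nn.MSELoss(), nn.L1Loss(), nn.SmoothL1Loss()]

outputs = model(inputs)
losses = [criterion(outputs, targets) for criterion in criteria]

# Sum the scalar losses while they are still tensors, so autograd can
# trace the total back to every individual loss. sum(losses) also works.
total_loss = torch.stack(losses).sum()

model.zero_grad()
total_loss.backward()  # gradients of the summed loss land in the model parameters

print(total_loss.item(), model.weight.grad.shape)
```

A common reason such attempts fail is converting the losses to Python floats (for example with `.item()`) before adding them, which detaches them from the graph; whether that is what happened in the question is a guess.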


In short, if a PyTorch operation supports broadcasting, its Tensor arguments can be automatically expanded to be of equal sizes without making copies of the data.

Two tensors are broadcastable when, iterating over the dimension sizes starting at the trailing dimension, the sizes in each position are equal, one of them is 1, or one of them does not exist. If x and y do not have the same number of dimensions, prepend 1 to the dimensions of the tensor with fewer dimensions to make them equal length. Then, for each dimension, the resulting size is the max of the sizes of x and y along that dimension.

One complication is that in-place operations do not allow the in-place tensor to change shape as a result of the broadcast.

Prior versions of PyTorch allowed certain pointwise functions to execute on tensors with different shapes, as long as the number of elements in each tensor was equal. The pointwise operation would then be carried out by viewing each tensor as 1-dimensional. Note that the introduction of broadcasting can cause backwards-incompatible changes in the case where two tensors do not have the same shape but are broadcastable and have the same number of elements. For example: Size [4,1], but now produces a Tensor with size: torch.
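The broadcasting rule can be sketched in plain Python, without PyTorch, to make the shape arithmetic explicit. The helper name `broadcast_shape` is hypothetical and the shapes are illustrative; this mirrors the rule as described above and is not PyTorch's own implementation.

```python
from itertools import zip_longest


def broadcast_shape(x_shape, y_shape):
    """Return the shape produced by broadcasting two shapes, or raise ValueError."""
    result = []
    # Walk both shapes from the trailing dimension; a missing dimension
    # is treated as size 1 (equivalent to prepending 1s to the shorter shape).
    for x_dim, y_dim in zip_longest(reversed(x_shape), reversed(y_shape), fillvalue=1):
        if x_dim != y_dim and x_dim != 1 and y_dim != 1:
            raise ValueError(f"shapes {x_shape} and {y_shape} are not broadcastable")
        # When the sizes differ, one of them is 1, so take the other one.
        result.append(y_dim if x_dim == 1 else x_dim)
    return tuple(reversed(result))


# Two shapes with the same number of elements (4 each) that are also broadcastable.
print(broadcast_shape((4, 1), (4,)))
# Shapes with different numbers of dimensions.
print(broadcast_shape((5, 1, 4, 1), (3, 1, 1)))
```

The first call shows why the change can be backwards incompatible: both inputs hold four elements, yet the broadcast result has shape (4, 4) rather than a result with four elements.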


OS: Ubuntu Size [1, 1, 1]. This should have just landed in Is it possible for you to use a Docker container to see if the problem still exists? Motherboard info dmidecode 3. Can you run the following commands and send the output: Versions Collecting environment information Thanks for the question and suggestion. Update: When pulling the Docker image, I unfortunately ran into disk space issues.


I'm running under ESXi 8, Ubuntu Sparse tensors Done. Size [3]. Support sum on a sparse COO tensor. The issue is fixed by The reason I am asking is that leileichan reported the container is good. I was able to make torch.
